Digital video is now ubiquitous: in web-based video from YouTube and Netflix, in IPTV from telephone companies, in "digital cable" from cable TV companies, and in videoconferencing with Skype and Google Hangouts. Here are the key elements of digital video:

- Definition (picture size):
  - 720x480 (US Standard Definition)
  - 1280x720 (High Definition, HD)
  - 1920x1080 ("Full HD", "True HD", "2K")
  - 3840x2160 ("Ultra HD", "Quad Full HD", "4K")

- Compression:
  - MPEG-2: 3+ Mb/s: DVD, DTH video
  - H.264: 1/3 the bit rate of MPEG-2, better error recovery. Specified in MPEG-4 Part 10: mp4 files, Blu-ray discs, HD channels

- Network services: analog or DS0 channels → IP packets

- Call setup: dedicated → ISDN → SIP

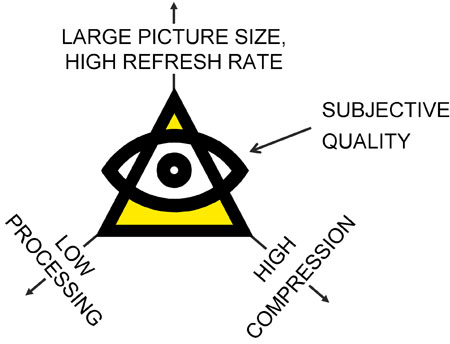

A number of factors affect the perceived, or subjective, quality of the images on the far-end user's screen. Aside from network issues like transmission error rate and variability of delay, the main factors are picture size and refresh rate, the number of bits per second required, and the number of processing operations that must be performed per second to code and decode in real time.

The objective is to transmit high-definition images at a low number of bits per second while achieving reconstructed picture quality that people are willing to accept. However, these factors often conflict: for example, high compression requires intensive processing, and a large picture size means a higher number of bits per second.

It is one thing to optimize two of the three factors; it is another to optimize all three at the same time.

Definition, measured in pels (pixels), is the correct term for picture size. "Resolution" is not the correct term for picture size, as it refers to the quality of the image after reconstruction. They aren't "High Resolution" TVs; they are "High Definition" TVs...

Videophones and desktop videoconferencing systems have in the past supported the Common Intermediate Format (CIF) at 352x288 pixels, though that sounds antiquated compared to a 1920x1080 pixel display.

Standard Definition (SD) is 720x480 in the US, refreshed 30 times per second.

For historical reasons, the refresh is done in two passes: every odd-numbered line, then every even-numbered line. Each pass draws one field, so there are 60 fields per second; this is referred to as 60 Hz interlaced. Also for historical reasons, there is enough time to draw 525 lines even though only 480 are drawn on the screen. This gave rise to a secret code to refer to US SD: SD/525 60i.
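A minimal Python sketch of the two-pass idea, assuming a frame is just a list of rows (real systems deal with pixel arrays and scan timing). Numbering lines from 1, the odd-numbered lines form one field and the even-numbered lines form the other; the function name `split_into_fields` is hypothetical.

```python
# Sketch of interlaced scanning: one frame is drawn as two fields.
# Assumption: a frame is modelled as a plain list of rows.

def split_into_fields(frame):
    """Return (odd_field, even_field) using 1-based line numbering."""
    odd_field = frame[0::2]   # lines 1, 3, 5, ... (list indices 0, 2, 4, ...)
    even_field = frame[1::2]  # lines 2, 4, 6, ... (list indices 1, 3, 5, ...)
    return odd_field, even_field

FRAME_RATE = 30        # frames per second, US SD
VISIBLE_LINES = 480    # lines actually drawn on screen

frame = [f"line {n}" for n in range(1, VISIBLE_LINES + 1)]
odd, even = split_into_fields(frame)

print(len(odd), len(even))                   # 240 240
print(odd[0], "/", even[0])                  # line 1 / line 2
print("fields per second:", 2 * FRAME_RATE)  # fields per second: 60
```

Each field carries half the lines, so two fields per frame at 30 frames per second gives the 60 Hz figure.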

In the rest of the world, the definition is 720x576: the screen is refreshed only 25 times per second, so more lines can be drawn in each frame while the number of lines per second stays approximately the same. The secret code: SD/625 50i.
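A quick check of the line-rate arithmetic, using the nominal 30 and 25 frame rates and the total line counts (525 and 625), including lines that are not displayed. `line_rate` is a hypothetical helper for illustration.

```python
def line_rate(lines_per_frame, frames_per_second):
    """Lines scanned per second = total lines per frame x frames per second."""
    return lines_per_frame * frames_per_second

us = line_rate(525, 30)      # SD/525 60i
world = line_rate(625, 25)   # SD/625 50i

print(f"US: {us:,} lines/s, rest of world: {world:,} lines/s")
print(f"difference: {abs(us - world) / us:.1%}")
```

The two rates (15,750 and 15,625 lines per second) differ by less than 1%, which is what "approximately the same" means here.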

There are a number of flavors of High Definition (HD), the two most popular currently being 1280x720 non-interlaced (progressive), called "720p", and 1920x1080, either interlaced ("1080i") or progressive ("1080p"). Either can be refreshed 50 or 60 times per second.

Of course, this will never stop; there will continually be displays with more pixels. "4K" is 3840x2160. Sometime in the future, "4M" will be something like 3840000 x 2160000 pixels.
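For a sense of scale, this sketch counts the pixels in each picture size mentioned in this section, relative to 1920x1080. The labels in `FORMATS` are names chosen for illustration only.

```python
# Pixel counts for common picture sizes, compared with 1920x1080.
FORMATS = {
    "CIF": (352, 288),
    "SD (US)": (720, 480),
    "SD (rest of world)": (720, 576),
    "HD 720p": (1280, 720),
    "Full HD 1080": (1920, 1080),
    "4K Ultra HD": (3840, 2160),
}

full_hd = 1920 * 1080  # reference pixel count

for name, (w, h) in FORMATS.items():
    pixels = w * h
    print(f"{name:20} {w}x{h} = {pixels:>9,} pixels "
          f"({pixels / full_hd:.2f}x Full HD)")
```

3840x2160 works out to exactly four times the pixels of 1920x1080, which is where the "Quad Full HD" name comes from.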

Compression is required to transmit or even store these images. Uncompressed 720x480 at 30 Hz, with one byte each for red, green and blue, is about 250 Mb/s. 1920x1080 at 60 Hz is about 3 Gb/s (!), which is more than most consumers can handle.
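The raw bit rates come from a one-line formula: width × height × bits per pixel × frames per second. This sketch assumes 24 bits per pixel (one byte each for red, green and blue) and ignores blanking intervals, audio and any chroma subsampling; `raw_bit_rate` is a hypothetical name.

```python
def raw_bit_rate(width, height, frames_per_second, bits_per_pixel=24):
    """Uncompressed video bit rate in bits per second.

    Assumes 8 bits each for R, G and B (24 bits per pixel) and counts
    only visible pixels.
    """
    return width * height * bits_per_pixel * frames_per_second

sd = raw_bit_rate(720, 480, 30)
full_hd = raw_bit_rate(1920, 1080, 60)

print(f"SD:      {sd / 1e6:.1f} Mb/s")       # SD:      248.8 Mb/s
print(f"1080p60: {full_hd / 1e9:.2f} Gb/s")  # 1080p60: 2.99 Gb/s
```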

Compression is performed by an algorithm called a coder/decoder or codec. To operate in real time (at playing speed), codecs are usually implemented as highly optimized machine code on custom-built chips containing multiple Digital Signal Processors (DSPs).
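To see why real-time coding calls for dedicated hardware, this sketch computes the time available per frame and per pixel at 1920x1080 and 60 frames per second. `time_budget` is a hypothetical helper, and the per-pixel view is a simplification: real codecs work on blocks of pixels rather than one pixel at a time.

```python
def time_budget(width, height, frames_per_second):
    """Return (seconds per frame, seconds per pixel) for real-time processing."""
    per_frame = 1.0 / frames_per_second        # time to finish one frame
    per_pixel = per_frame / (width * height)   # that time spread over every pixel
    return per_frame, per_pixel

frame_s, pixel_s = time_budget(1920, 1080, 60)
print(f"per frame: {frame_s * 1e3:.1f} ms")  # per frame: 16.7 ms
print(f"per pixel: {pixel_s * 1e9:.2f} ns")  # per pixel: 8.04 ns
```

At about 8 ns per pixel, a codec that needed, say, 100 operations per pixel (a hypothetical figure) would have to sustain roughly 12 billion operations per second, which helps explain why the work is spread across multiple DSPs.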

Standards are required for interoperability. The Moving Picture Experts Group (MPEG) and the ITU establish standards in this area. MPEG-1 was for video on CDs, with the video coded at 1.15 Mb/s. This was replaced by MPEG-2, which offers a wide range of coding and compression options, grouped in profiles. Each profile supports a certain picture size (definition), refresh rate and image quality, and results in a different average bit rate. MPEG-2 is currently used as the basis for video stored on Digital Versatile Discs (DVDs) and transmitted via Direct to Home (DTH) video services.

With the advent of HD, a coding algorithm standardized as ITU-T H.264 has emerged. It provides the same quality as MPEG-2 at 1/3 the bit rate, with better tolerance to transmission errors. It has replaced MPEG-2 on Blu-ray discs and HD broadcasts. H.264 is specified in Part 10 of the MPEG-4 standard, so files often have the .mp4 extension.
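This sketch expresses the two codecs as compression ratios for US SD video. It assumes an MPEG-2 rate of 3 Mb/s (the low end of the "3+ Mb/s" figure) and takes H.264 at exactly 1/3 of that; real coded bit rates vary with content and encoder settings, so the ratios are only indicative.

```python
# Compression ratio = uncompressed bit rate / coded bit rate.
RAW_SD = 720 * 480 * 24 * 30   # bits per second, 8 bits each for R, G, B

MPEG2_SD = 3_000_000           # assumption: 3 Mb/s for MPEG-2 SD video
H264_SD = MPEG2_SD / 3         # "same quality at 1/3 the bit rate"

for name, coded in [("MPEG-2", MPEG2_SD), ("H.264", H264_SD)]:
    print(f"{name}: {coded / 1e6:.1f} Mb/s, about {RAW_SD / coded:.0f}:1")
```

Under these assumptions MPEG-2 compresses SD video by roughly 83:1 and H.264 by roughly 249:1.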

Network services used for video are changing from dedicated channels to IP packets. For videoconferencing and videophones, the call setup standards have evolved from DS0 channels (ISDN) to H.323's H.225/H.245 (NetMeeting) to the Session Initiation Protocol (SIP). SIP appears to have gained critical mass and will be used to set up all sessions in standards-based systems.
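A minimal sketch of what SIP session setup looks like on the wire: this Python function builds the text of an INVITE request (RFC 3261) carrying an SDP body (RFC 4566) that offers an H.264 video stream. It only formats the message and does not send it; the function name, addresses, tag, branch and Call-ID values are hypothetical placeholders.

```python
# Build a minimal SIP INVITE whose SDP body offers H.264 video.
# All names, addresses, tags and IDs are hypothetical placeholders.

def build_invite(caller, callee, caller_host, call_id):
    sdp = "\r\n".join([
        "v=0",
        f"o=- 0 0 IN IP4 {caller_host}",
        "s=-",
        f"c=IN IP4 {caller_host}",
        "t=0 0",
        "m=video 49170 RTP/AVP 96",    # video on UDP port 49170, dynamic payload type 96
        "a=rtpmap:96 H264/90000",      # payload type 96 is H.264 with a 90 kHz clock
    ]) + "\r\n"
    headers = [
        f"INVITE sip:{callee} SIP/2.0",
        f"Via: SIP/2.0/UDP {caller_host}:5060;branch=z9hG4bK776asdhds",
        "Max-Forwards: 70",
        f"To: <sip:{callee}>",
        f"From: <sip:{caller}>;tag=1928301774",
        f"Call-ID: {call_id}",
        "CSeq: 1 INVITE",
        f"Contact: <sip:{caller}>",
        "Content-Type: application/sdp",
        f"Content-Length: {len(sdp.encode())}",  # body length in bytes
    ]
    # A blank line separates the headers from the SDP body.
    return "\r\n".join(headers) + "\r\n\r\n" + sdp

msg = build_invite("alice@192.0.2.10", "bob@example.com",
                   "192.0.2.10", "a84b4c76e66710")
print(msg)
```

In a real call the callee answers with a 200 OK containing its own SDP, and the caller confirms with an ACK; the video itself then travels separately in RTP packets.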